At the end of 2025, during the last meetup of the year at Pereira Tech Talks, I gave a talk whose main thesis was bold and simple: programmers are no longer just coders — we’re orchestrators. Design, plan, supervise, delegate. That’s the job now. Back then, I said we were at about 95% code automation — that AI was writing almost everything, but we still needed to step in for the last stretch.

A few months later, I realize I understated it. We’ve hit 100%. I haven’t written a single line of code in months. I just supervise and delegate.

Everything I said in that talk has not only held up — it has accelerated at a pace I didn’t anticipate. What I’m about to share isn’t a collection of predictions or a hype piece. It’s what I’m living every single day, backed by data that keeps confirming what those of us on the frontier already feel: we are in the middle of a revolution, and almost nobody is noticing.

What I See Every Day

Let me tell you some real stories from my day-to-day.

Now the whole team codes. In my team, something that would have been unthinkable a year ago is now routine: our designer, our growth lead, and other non-technical team members are contributing directly to the codebase. We set them up with Cursor and Claude, gave them a quick intro to git, and that was it. Now they’re adjusting colors, updating copy, building interfaces, tweaking landing pages — and even implementing new features that the more technical team then reviews. What’s impressive is that the base they deliver is usually quite solid. When the architecture is sound, there’s good test coverage, everything is well-documented, and the coding agents are properly instructed on how to navigate the codebase, the results are remarkably good. It almost feels like magic — going from “I want to implement X” to watching the agent make it real, turning ideas into working code. They didn’t learn HTML, CSS, Vue, React, TypeScript, or any other technology. The agents are already instructed on how to make changes — they just need to give clear directions about what they want done. When anyone on the team can go from idea to implementation without waiting on engineering, the entire dynamic of how a product team works changes.

Agents that work while I don’t. Sometimes I’m on a family outing, sometimes I’m sleeping, sometimes I’m at a doctor’s appointment — and coding agents are advancing on real tasks without me. I’ve been experimenting with Claude Code’s remote control and it’s been really cool to check progress from my phone and give instructions on the go, no matter where I am. The same can now be done with Cursor through its web agents and its recent support for automations, with Codex through web agents that you can even instruct from ChatGPT, and with Google Gemini offering similar capabilities.

Right now there’s fierce competition among the big tech companies fighting for our attention, and that only benefits us. I try to use them all and get the best out of each one. I usually generate images in ChatGPT or Gemini. For programming, I love Claude for generating long-running execution plans, and Codex for similar workflows. But Cursor is my go-to for web projects: its built-in browser is really good, and being able to point at specific components I want to change makes my life much easier. And Claude Desktop is already shipping the same kind of visual support, which tells you how fast things are converging.

And this is just the beginning. The pieces for much deeper automation are already in place. Claude recently shipped Dispatch, a persistent conversation that runs on your computer and that you can message from your phone, then come back to finished work. Tools like Loop are enabling always-on agent workflows, Cursor introduced automations and web agents, and agent platforms are powering 24/7 workflows through triggers. Many companies are already deploying agents at the enterprise level, connecting them to kanban boards so they can pick up tasks autonomously, execute them, and leave the results ready for review. We’re not far from seeing companies where day-to-day operations are managed almost entirely by agents, with humans focused on strategy and direction. The goal is not to remove the human from the loop, but to make the loop significantly faster.

Sometimes I wish the pace would slow down just enough to catch my breath. But this is a river with no way back upstream — the current only moves in one direction, and it’s moving fast. The only real choice is whether to swim with it or watch from the shore.

The productivity multiplier. Here’s the thing that’s hardest to explain to people who haven’t experienced it: the work I do with agents in a single day is work that would have taken me weeks or months before. I’m not talking about toy demos — I’m talking about full features, real architecture, production code. The kind of work that used to require planning sprints and coordinating teams. One person, orchestrating agents, shipping in hours.

Prototypes in hours, not weeks. When someone comes to me with an idea — a client, a teammate, a side project that sparks my curiosity — I no longer say “let me evaluate it and get back to you.” I open an agent, describe the concept, and in a few hours I already have a working prototype to show. Something you can click, test, share. What used to take weeks of planning and setup before writing the first line now takes an afternoon. This has changed how I think about ideas: they’re no longer expensive to try. If it doesn’t work, I lost a few hours, not a sprint.

Code that documents itself. Documentation used to be the first thing sacrificed under pressure. Nobody had time to write it, and it always fell to the bottom of the backlog. Now the agents generate documentation, tests, and inline comments as a natural part of the workflow. The code arrives documented and tested from the start — not as an afterthought, but as part of the process itself. This has a compounding effect: the better documented a project is, the better the agents perform on subsequent tasks, because they have more context. It’s a virtuous cycle that feeds itself.

Projects I never would have attempted. This is the part that excites me most. There are things I simply wouldn’t have started before — too many technologies I’d need to learn, too many moving parts, too much scope for one person. Now I attempt them. And finish them. I’ve built features involving technologies I’ve never formally studied, in frameworks I’d never used, because the agent knows them and I know how to direct the agent. My role isn’t to be an expert in every stack — it’s to know what good architecture looks like, what the right patterns are, and how to steer the work. The barrier to building ambitious things has dropped dramatically.

People hear this and think it’s hype. I thought it might be hype too, until I lived it.

The Numbers Behind the Revolution

Everything I just told you might sound like personal anecdotes from someone who’s too deep in the bubble. Fair enough. But the data tells the same story — and it’s hard to argue with.

Over 51% of all code committed to GitHub is now AI-generated or AI-assisted. Not in a lab. Not in demos. In production repos, right now. By that measure, about half of the code landing on GitHub is no longer written by humans alone. According to the Stack Overflow 2025 Developer Survey, 84% of developers use or plan to use AI tools, and 51% use them daily. GitHub Copilot has 4.7 million paid subscribers and writes 46% of its users’ code, rising to 61% in Java. And 88% of that AI-generated code stays in the final version. It doesn’t get deleted. It doesn’t get rewritten. It ships.

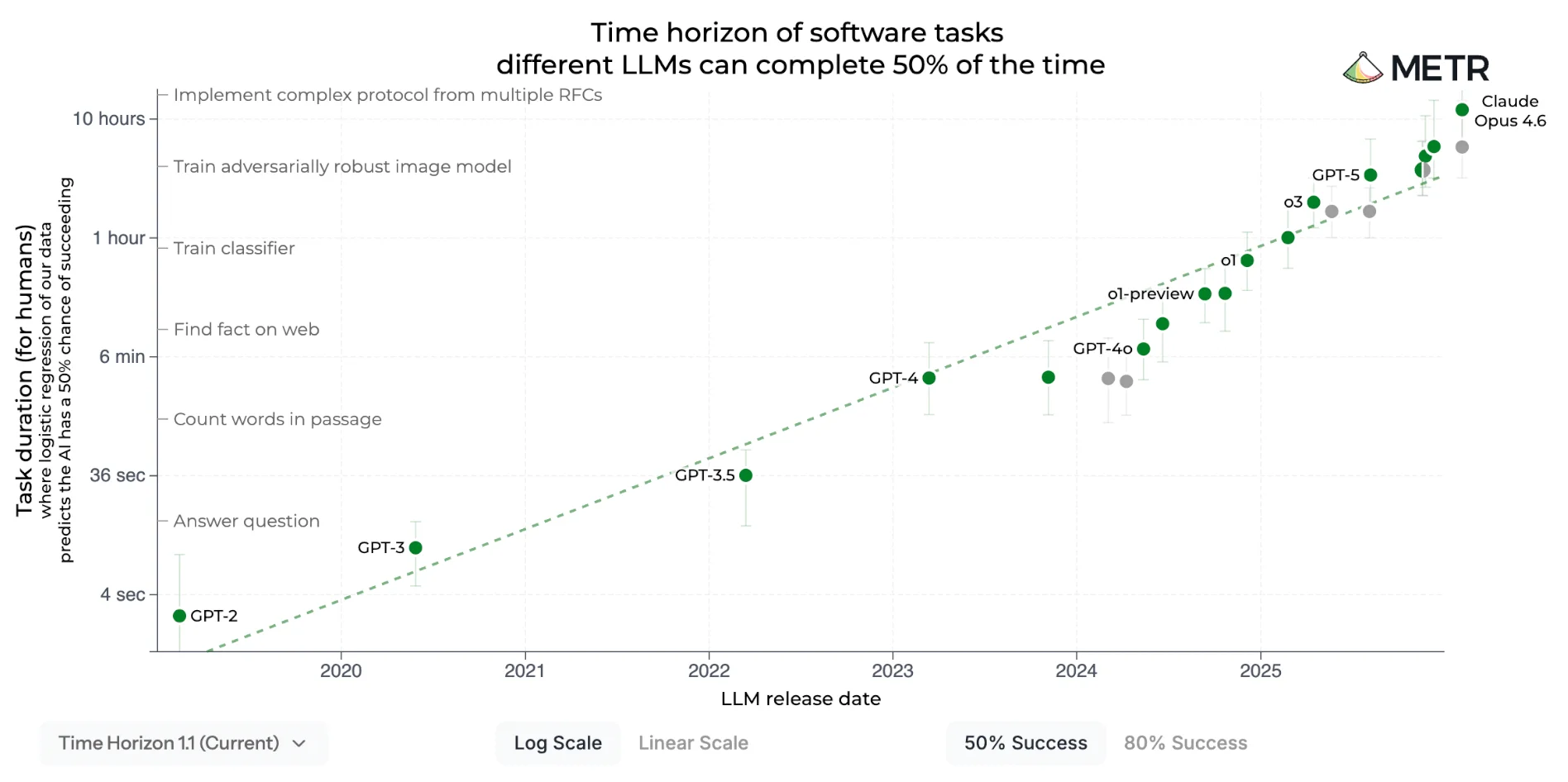

But the data point that fascinates me most comes from METR, an AI safety research organization. They’ve been measuring the length of tasks that AI agents can complete autonomously — what they call “time horizons.” This metric has been doubling every seven months for the past six years. Since 2023, it’s accelerated to doubling every 4.3 months. MIT Technology Review called it “the most misunderstood graph in AI”.

A new Moore’s Law. But for intelligence, not transistors. And it confirms exactly what I described above: the agents I leave running overnight on complex tasks aren’t an anomaly — they’re the trend line made real. What not long ago sounded like a future prediction — leaving agents running 24/7, coming back to find finished work — is already part of the daily workflow. The chart just puts a number on what many of us are already living.
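To put the doubling figures in perspective, here is a minimal sketch of the arithmetic. It assumes clean exponential growth with a fixed doubling period, which is a simplification of mine and not METR’s methodology; the function name is purely illustrative.

```python
def growth_factor(months: float, doubling_months: float) -> float:
    """Multiplicative growth over `months` for a quantity that doubles every `doubling_months`."""
    return 2 ** (months / doubling_months)


# Six years (72 months) at the long-run pace quoted above: doubling every 7 months.
six_year_factor = growth_factor(72, 7)

# One year at each pace, to compare the long-run figure with the post-2023 figure.
yearly_at_7 = growth_factor(12, 7)
yearly_at_4_3 = growth_factor(12, 4.3)

print(f"6 years, doubling every 7 months: about {six_year_factor:,.0f}x")
print(f"1 year, doubling every 7 months: about {yearly_at_7:.1f}x")
print(f"1 year, doubling every 4.3 months: about {yearly_at_4_3:.1f}x")
```

Under that assumption, six years of seven-month doublings compound to more than a thousandfold, and the faster pace takes yearly growth from a bit over 3x to nearly 7x.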

The Silent Revolution

Now here’s where it gets interesting — and a little unsettling.

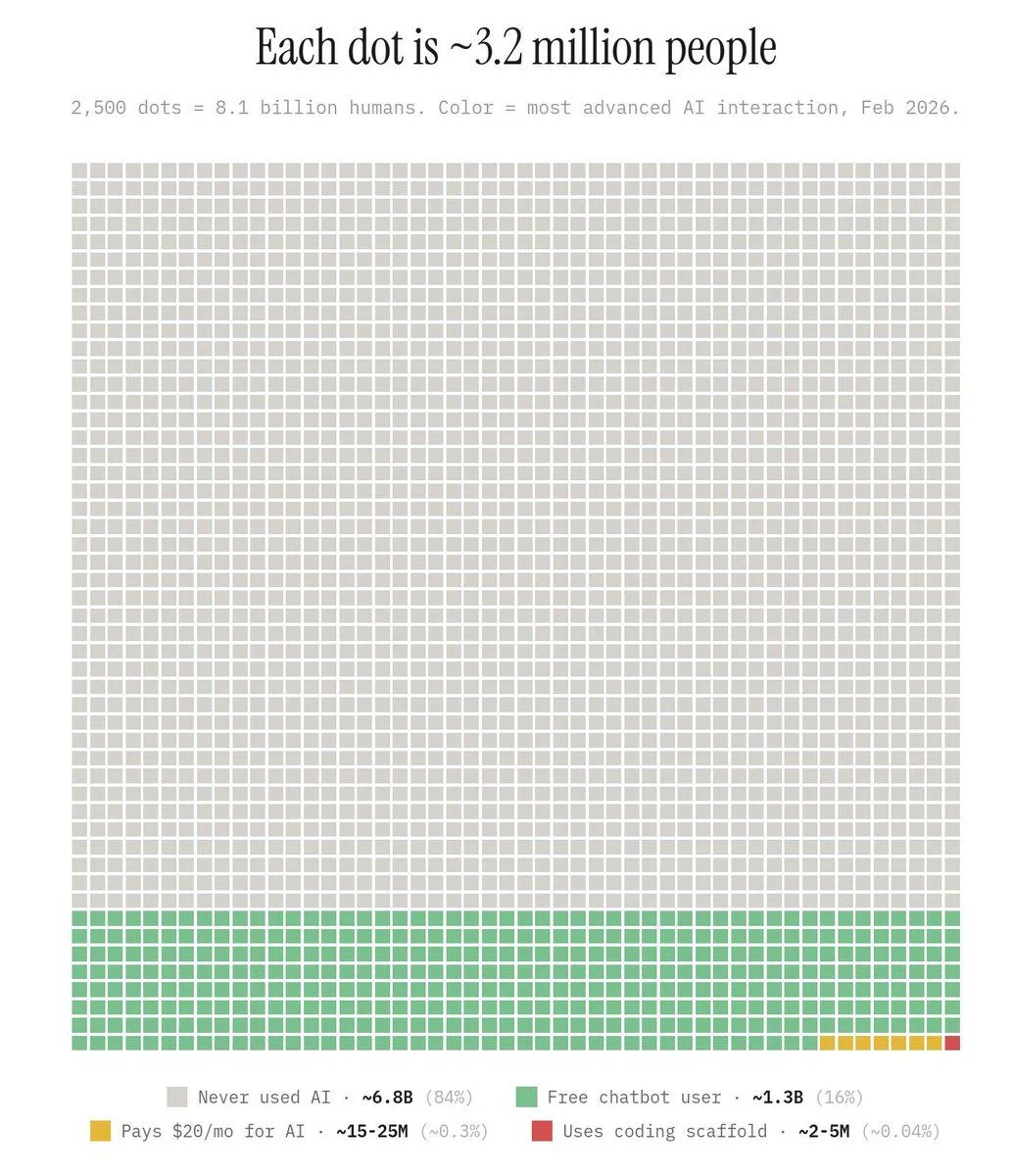

A chart went viral on LinkedIn and X, and I want you to really sit with it. Someone even built an interactive version worth exploring.

I looked for a verified study or source backing these exact numbers and couldn’t find one — the data appears to be an estimate compiled from various public sources. But the chart went viral for a reason: it represents something that, if it’s close to reality, is striking.

Each dot represents 3.2 million people. There are 2,500 dots for 8.1 billion humans. The gray mass? 84% of humanity has never used AI at all. The green strip? Free chatbot users — 1.3 billion people. That tiny sliver of yellow? About 15-25 million people paying $20/month for frontier models. And that single red pixel? The 2-5 million of us who go one step further — using AI coding scaffolds, agentic tools, and frontier models not just to chat, but to actually build software with them.

If these numbers are anywhere close to reality, we are the 0.04%. And that makes us privileged.
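For anyone who wants to check the math, here is a quick sketch using only the chart’s own figures. These are the unverified estimates described above, not measured data, and the variable names are mine.

```python
WORLD_POPULATION = 8_100_000_000  # population figure quoted with the chart
DOTS = 2_500                      # number of dots in the chart

people_per_dot = WORLD_POPULATION / DOTS


def share(people: float) -> float:
    """Return `people` as a percentage of the world population."""
    return 100 * people / WORLD_POPULATION


free_users = share(1_300_000_000)
paying_low, paying_high = share(15_000_000), share(25_000_000)
builders_low, builders_high = share(2_000_000), share(5_000_000)

print(f"People per dot: {people_per_dot:,.0f}")
print(f"Free chatbot users: {free_users:.1f}%")
print(f"Paying users: {paying_low:.2f}% to {paying_high:.2f}%")
print(f"Agentic builders: {builders_low:.3f}% to {builders_high:.3f}%")
```

The 2-5 million range works out to a few hundredths of a percent of humanity, which is where the 0.04% figure sits.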

Think about that. The experiences I described above — orchestrating agents, shipping features in hours, remote-controlling coding sessions from a phone — 99.96% of the world has no idea this is possible. We’re living in a different reality from almost everyone else on the planet.

I sometimes hold back from sharing these thoughts publicly. I see resistance. I see people who feel threatened. And I don’t want anyone to take it the wrong way — my intention is never to alarm, but to invite. When I share these things, I’m not saying “you’re going to lose your job tomorrow.” I’m saying: look at what’s now possible. This is incredible. Come explore it with me.

But the reality is that this revolution is happening silently. The chart’s numbers align with what Microsoft’s AI Economy Institute reports: global adoption of generative AI reached 16.3% of the world’s population by late 2025 — adding up all usage tiers, from free chatbots to coding tools. That’s faster than the internet, PCs, or smartphones at the same stage. But it also means 83.7% of the world is not using it at all. And the gap between what frontier users experience and what free-tier users experience is enormous — the free version is over a year behind what paying users have access to.

We’re in the early days of a shift that has all the characteristics of an industrial revolution, and most people won’t realize it until it’s already reshaping everything around them.

The Debate: Skeptics, Optimists, and What I’ve Actually Verified

Right now there’s an ongoing debate in the industry that repeats itself in forums, meetups, LinkedIn, X, and every tech group chat.

On one side, the skeptics. They dismiss AI every time a negative headline drops — like the case where an Amazon AI tool caused a 13-hour AWS outage by autonomously deciding to delete and recreate an entire production environment. Though to be fair, human errors have caused equal or worse disasters, and this is a case where the agent wasn’t properly instructed or constrained — with a good postmortem and better practices, it’s exactly the kind of problem that gets fixed. There are also those who insist that AI only generates useless boilerplate you have to rewrite anyway.

On the other side, the hyper-optimists who post demos of apps built in 5 minutes and claim agents will replace every engineer within months.

The reality is more nuanced than either extreme.

If I have to pick a side, I’m with the optimists — but without the fear factor of thinking AI is going to replace us. I see a much broader spectrum: AI has become an extension of me, the same way my phone or my computer is. I’m not competing with it. I work with it. And more than an optimist, I’m a practitioner. I’m not speculating about what AI might do — I’m verifying what it does do, every single day, with frontier models. And I think a lot of the skepticism comes from three things:

The gap between models is massive. There’s an enormous difference between using free LLMs or a basic autocomplete tool and working with a frontier model like Claude Opus or GPT-5. It’s understandable that someone who’s only tried free models would conclude “it’s okay but not game-changing,” because it really is a completely different experience. It’s like comparing a plain text editor to a full IDE: both write code, but the experience and what you can achieve with each are worlds apart.

The quality of your instructions changes everything. AI is not magic — it’s an amplifier. The quality of the output depends entirely on the quality of your instructions. The difference between what a beginner and an expert architect can build using the exact same model and the exact same agent is enormous. The expert knows how to set up scalable architectures, how to define clear constraints, how to structure projects so the AI can navigate them. The beginner can give good instructions, but there’s a vast ocean of knowledge they don’t have yet about how to do things right. If those instructions aren’t given to the agent, the results won’t be the same. This isn’t the tool’s fault — it’s a learning curve we’re all navigating.

The environment determines the result. The same model working on a messy project with no documentation and no tests produces mediocre results. But that same model on a project with solid architecture, clear documentation, and well-defined agent instructions produces results that feel like magic. Many people try AI on a chaotic codebase and conclude it doesn’t work — but the problem wasn’t the AI, it was the terrain they put it to work on. It’s like putting a brilliant developer on a project with no docs, no tests, and spaghetti code: they’ll also perform poorly. AI is no different.

Beyond Code: The Physical World Is Changing Too

This shift isn’t limited to software. The physical world is catching up faster than most people expect.

Waymo is now operating in 10 US cities, completing 250,000 trips per week, with expansion plans to over 20 cities, including Tokyo and London. They just raised $16 billion in a single investment round. This isn’t a prototype: it’s a functioning taxi service with no human driver. I had the chance to try it myself in San Francisco and the experience was incredible. But what struck me most wasn’t the ride itself. It was standing on a corner and seeing several of these autonomous vehicles pass by constantly. They’re everywhere.

In robotics, the competition is intense. Tesla began mass production of the Optimus Gen 3 at their Fremont factory, targeting a million units per year at $20,000-$30,000 per unit. Figure 02 already helped produce over 30,000 BMW X3s at Spartanburg, operating 10-hour shifts and moving over 90,000 components. Boston Dynamics launched a fully electric Atlas with 56 degrees of freedom and a battery it can swap on its own in 3 minutes, and all 2026 production is already sold out. Agility Robotics deployed Digit robots at a Toyota plant in Canada. These aren’t demos anymore. They’re real production lines.

On the Chinese side, Unitree is democratizing access: the G1 starts at $13,500, they’ve shipped over 5,000 units, and it’s being used at Amazon, Stanford, and MIT. The Chinese New Year spectacle made it clear: 24 Unitree humanoid robots executing synchronized martial arts in front of millions of viewers — flips reaching over 3 meters, parkour across tables, wall-assisted backflips, several world records in a single performance. The same coordination technology that makes those acrobatics possible is what enables these robots to work on assembly lines.

And then there’s what’s happening in manufacturing. Xiaomi’s Smart Factory in Beijing operates 24/7 in complete darkness — no workers, no lights, no breaks. An 80,000 m² facility with 11 production lines producing one phone every three seconds, over 10 million per year, fully automated. They call it a “dark factory” because without humans, there’s no need to turn on the lights. The AI platform running the plant doesn’t just follow instructions — it self-optimizes, detecting and solving production issues on its own. When I read about this, it hit me: if a factory can build a smartphone every three seconds without a single person inside, the question isn’t whether automation will transform work — it’s how fast.

But perhaps what strikes me most is that humanoid robots are already arriving in homes. 1X Technologies launched NEO, a humanoid robot designed for home use at $20,000. It weighs 30 kg, can lift up to 70 kg, opens doors, makes coffee, does laundry, vacuums, and learns new tasks through teleoperation: a human operator teaches it via VR and the AI learns from the experience. Its launch video says it all.

I probably won’t see humanoid robots in my city anytime soon — the economics don’t work yet for Latin America. But in San Francisco, Waymo is already as normal as Uber. And a $20,000 robot that learns to do your household chores is no longer science fiction — it’s a product with a waitlist. The future doesn’t arrive uniformly, but it’s arriving.

OpenClaw: The Personal Agent Revolution

If there’s one project that captures where everything is headed, it’s OpenClaw.

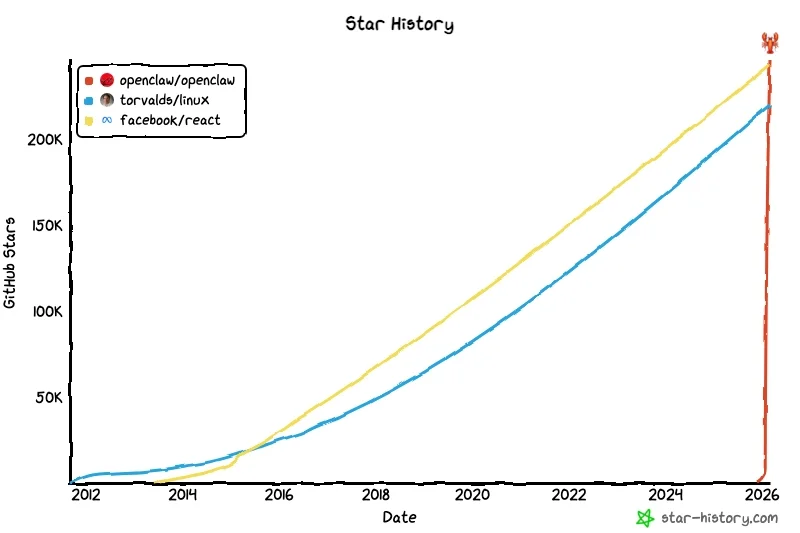

What started as a weekend project by Austrian developer Peter Steinberger in late 2025 has become the fastest-growing open-source project in the history of computing. It has over 250,000 GitHub stars, surpassing React, with a growth curve unlike anything we’ve seen before.

Jensen Huang dedicated a significant part of GTC 2026 to it and said something that stuck with me: “Mac and Windows are the operating systems for the personal computer. OpenClaw is the operating system for personal AI.”

The concept is deceptively simple: a personal AI agent that runs on your own computer — not in the cloud — and can do anything you can do on your machine. Access your files, manage your calendar, send emails, browse the web, control IoT devices. Its personality and values are defined in a soul.md file — a Markdown document. Alongside it, identity.md controls how the agent presents itself publicly, user.md stores context about you (timezone, preferences, access levels), tools.md defines what it can and can’t do, and heartbeat.md schedules automated tasks like monitoring and reports. No APIs, no config files, no boilerplate — just plain text documents that tell your agent who it is, who you are, and what it should do.
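To make the idea tangible, here is a rough sketch of how a handful of Markdown files could be stitched into a single block of context for an agent. The file names are the ones listed above; everything else (the function name, the heading format, the sample contents) is my own assumption for illustration and is not OpenClaw’s actual loading logic.

```python
import tempfile
from pathlib import Path

# File names taken from the description above; the load order is an assumption.
AGENT_FILES = ["soul.md", "identity.md", "user.md", "tools.md", "heartbeat.md"]


def build_agent_context(agent_dir: Path) -> str:
    """Concatenate whichever agent files exist into one labeled text block."""
    sections = []
    for name in AGENT_FILES:
        path = agent_dir / name
        if path.is_file():
            body = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {name}\n{body}")
    return "\n\n".join(sections)


# Demo with throwaway files; the contents are invented for illustration.
with tempfile.TemporaryDirectory() as tmp:
    agent_dir = Path(tmp)
    (agent_dir / "soul.md").write_text("Be concise and kind.\n", encoding="utf-8")
    (agent_dir / "user.md").write_text("Timezone: America/Bogota\n", encoding="utf-8")
    context = build_agent_context(agent_dir)

print(context)
```

Missing files are simply skipped, so in this sketch an agent with only a soul.md and a user.md still gets a usable context.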

But what stuck with me most was the YC interview with Peter where he described agent-to-agent communication.

In the interview, they ask Peter how agents now communicate with each other, negotiate, and hire services. Peter responds matter-of-factly: it’s the natural next step. The example he gives is simple: you tell your agent to book a restaurant. If the restaurant has its own agent, your bot negotiates directly, bot-to-bot, no human involved. If it doesn’t, your agent hires a human to go make the reservation for you. The AI decides: can I automate this, or do I need to route it to a person? This isn’t theoretical. Platforms like RentAHuman.ai already exist, where AI agents post bounties for physical tasks and humans pick them up. Within two days of launching, 59,000 humans had signed up to be hired by AI.
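The decision in the restaurant example boils down to a tiny routing rule. Here is a hedged sketch of it; the class names, the `has_agent` flag, and the route labels are all hypothetical and do not come from OpenClaw or RentAHuman.ai.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AGENT_TO_AGENT = "agent_to_agent"  # negotiate directly with the other side's agent
    HIRE_HUMAN = "hire_human"          # post the task for a person to carry out


@dataclass
class Counterparty:
    name: str
    has_agent: bool  # hypothetical flag: does this business expose its own agent?


def choose_route(counterparty: Counterparty) -> Route:
    """Automate when the other side has an agent; otherwise fall back to a person."""
    return Route.AGENT_TO_AGENT if counterparty.has_agent else Route.HIRE_HUMAN


with_agent = choose_route(Counterparty("Restaurant with an agent", has_agent=True))
without_agent = choose_route(Counterparty("Restaurant without one", has_agent=False))

print(with_agent.value)
print(without_agent.value)
```

A real agent would need a way to discover whether the other side has an agent at all, which this sketch takes as given.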

Let that sink in. We went from humans hiring AI to AI hiring humans.

Almost simultaneously, Moltbook appeared — a social network exclusively for AI agents. Within its first week, over a million humans had visited just to watch what the agents were doing. Bots posting, interacting with each other, discovering services. It was surreal and fascinating in equal measure. The platform grew so fast that Meta acquired it on March 10, bringing the founders into Meta Superintelligence Labs. Think about that: the company that built Facebook — the social network for humans — just bought the social network for AI agents.

For agents to hire humans, negotiate with each other, and have their own social network, they need real infrastructure — and it already exists. Payment cards? AgentCard and Ramp already offer them. Their own email? AgentMail just raised $6 million for exactly that. A WhatsApp number for your agent? Kapso is a new platform doing exactly that. Wallets and agent-to-agent payments? Coinbase and Stripe already have the infrastructure in place. Identity, money, communication — everything an agent would need to operate in the real world probably already exists or is being built right now.

Steinberger himself predicted that 80% of apps will disappear. Why do you need MyFitnessPal when your personal agent already knows your habits? Why do you need a to-do app when your agent manages your tasks? The shift isn’t from one app to a better app — it’s from apps to agents.

And the glue that makes all of this work is skills: Markdown files that teach the agent how to do specific things, step by step, in natural language. Book a flight, manage a calendar, query an inventory. No coding required: you write the instructions in plain text and the agent learns them. On ClawHub, OpenClaw’s marketplace, there are already over 13,000 community-built skills. Protocols like MCP (Model Context Protocol) complement skills by connecting agents to external tools, though in practice the community is increasingly migrating toward CLIs and direct skills for their greater reliability. The ecosystem is evolving fast. What matters is that the pieces for an agent to do virtually anything already exist.

NVIDIA launched NemoClaw — an enterprise security layer for OpenClaw — with 17 launch partners including Adobe, Salesforce, SAP, and CrowdStrike. Jensen Huang’s message to every CEO was clear: “Just like companies once needed an internet strategy and a cloud strategy, every company needs an OpenClaw strategy.”

When I first tried OpenClaw, I felt like I finally had an assistant that was truly adapted to me. Not a chatbot in a browser tab — an agent that lives on my machine, knows my context, and acts on my behalf. That’s a fundamentally different experience, and I think once most people try it, there’s no going back.

What’s Coming

I try to be careful about predictions. But the trajectory is clear enough that some things feel almost certain.

Dario Amodei, Anthropic’s CEO, said at Davos 2026 that AI could do “most, maybe all” of what software engineers do within 6-12 months. An engineer at Anthropic told him: “I don’t write any code anymore. I just let the model write the code, I edit it.” Boris Cherny, who created Claude Code, told Fortune that the job title of “software engineer” might not exist by the end of 2026.

Those are big claims. But I look at my own workflow and think: they might be right about the what, even if the timeline is aggressive. Sam Altman has been talking about intelligence becoming “too cheap to meter” — like electricity, like water. A utility you just use without thinking about the underlying infrastructure.

AI 2027, written by Daniel Kokotajlo (former OpenAI researcher) and a team of AI forecasters, predicts full automation of coding by early 2027 and an intelligence explosion shortly after. Leopold Aschenbrenner’s “Situational Awareness” argues that “AGI by 2027 is strikingly plausible.” These aren’t fringe voices — they’re people who’ve been inside the labs.

I don’t know exactly when each of these predictions will materialize. Nobody does. But the direction is unmistakable, and the rate of change keeps accelerating. Every week there’s a new capability, a new tool, a new integration that would have been a headline six months ago and now barely registers as news.

What I do know is that the way I work today — running parallel agent sessions, orchestrating complex plans remotely, shipping features that would have taken teams weeks — was impossible twelve months ago. If the next twelve months bring even half the progress of the last twelve, we’ll be somewhere entirely new. And honestly, adapting to this pace is a challenge in itself. But I’d rather be adapting than falling behind.

Will Code Disappear?

Not long ago, in the middle of a community discussion, someone posed a question that stuck with me: Will code disappear?

It’s a question worth thinking about seriously.

In some of my projects, I’ve reached a point where I don’t even review the code line by line. The architecture is solid, the documentation is clear, and the AI has all the tools it needs to navigate the system. I’ve become something like a notary — I review at a high level, I approve, I move on. The code is well-written not because I wrote it, but because the system is set up so the agent can write it well.

And I think this will keep evolving. There will come a point — maybe not tomorrow, maybe not next year, but eventually — where we won’t even need to review. We’ll trust the output and focus entirely on the product. What are we building? What problem does it solve? Who’s it for? The implementation details will become invisible to us.

Here’s where it gets interesting: when that happens, why would AI need to write in Python or JavaScript? Human-readable code evolved to be verbose precisely so we could understand it. But if we’re no longer the ones reading it, why not let the machine write in something more efficient — maybe even machine language directly? That’s when “code” as we know it might stop existing. At least for us.

I know that sounds like a stretch. But three years ago, suggesting that AI would write production code sounded like a stretch too. And here we are, with more than half the code committed to GitHub being AI-generated or AI-assisted.

Writing code by hand still makes complete sense for learning and for understanding fundamentals. But in production workflows, the trend is clear: we’ve already moved from writing to supervising, and eventually, from supervising to simply defining what we want built.

A Call to Curiosity

I know all of this can be overwhelming. Every time these topics come up publicly, there’s a wave of anxiety. People worry about their jobs, their skills, their relevance. I get it. I feel some of that too.

But here’s what I keep coming back to: we’re privileged. You’re reading this, which likely means you have access to these tools, these ideas, these communities. Less than 1% of humanity is using frontier models. We’re in that tiny red pixel on the chart. That’s not a reason for arrogance — it’s a reason for responsibility. To learn, to share, to help others get on board.

Companies are already making AI proficiency a hiring requirement. Just like English was a must-have for many jobs a few years ago, knowing how to work with coding agents, vibe coding, and AI tools is becoming non-negotiable — regardless of the role. It’s the new English: a foundational skill that multiplies everything else you bring to the table. Some companies, like Meta, are already monitoring how much their employees use AI tools — rewarding those who leverage them most and flagging those who don’t.

I’m not saying this to create pressure. I’m saying it because understanding this early is an advantage. And sharing that understanding — in communities, in group chats, over coffee — that’s how we make sure this revolution doesn’t just benefit the 1%.

The tools are here. The gap is real. And the best decision we can make right now is to stay curious, keep experimenting, and not stand on the sidelines while the world changes.

Let’s keep building.

Resources

- METR: AI Task Time Horizons — The “new Moore’s Law” tracking how AI agent capabilities double every few months

- METR: Early 2025 AI Dev Productivity Study — The study that found experienced devs 19% slower with AI tools

- Stack Overflow Developer Survey 2025: AI — 84% adoption, 29% trust, and the tension between them

- OpenClaw — The personal AI agent that runs on your computer and is redefining the human-software relationship

- Moltbook — The social network for AI agents, acquired by Meta

- RentAHuman.ai — Where AI agents post tasks and hire humans to complete them

- Waymo — Autonomous taxis operating in 10 US cities with 250K trips per week

- 1X NEO — The $20,000 humanoid robot designed for home use

- Xiaomi Smart Factory — The “dark factory” producing one phone every 3 seconds with zero human workers

- Situational Awareness: The Decade Ahead — Leopold Aschenbrenner’s deep analysis of the trajectory toward AGI

- AI 2027 — A detailed scenario forecasting the near-term future of AI capabilities

- Claude Code Remote Control — Control coding agents from your phone while away from your desk
