I didn’t want to give another generic AI overview. I wanted to share what I’ve been living every day — the shift from writing code to orchestrating agents. Design, plan, supervise. That’s the job now. And in this new era, knowing architecture, product, and design — and learning to delegate — matters more than ever.

I co-presented this talk with Sebastián Mora at Pereira Tech Talks, the last meetup of the year. We wanted to explore how AI is transforming programming from the ground up, from our perspective — what we’re building at DailyBot and what we’re seeing in the ecosystem.

Why We Chose This Topic

Sebastián and I had been talking about this shift for months. At DailyBot we’re not just writing code anymore. We’re designing workflows, configuring AI assistants, orchestrating automations. The engineers who thrive are the ones who think in systems and know when to delegate — to a model, to an agent, to a tool. I wanted the audience to see what I see: the job description has changed, and it’s worth leaning into.

From Writing Code to Orchestrating Agents

I walked the audience through what this means in practice. We’ve moved from only writing code to designing agents, orchestrating LLMs, wiring up RAG (retrieval-augmented generation) pipelines, and even talking to hardware in the real world. So what is an AI engineer, really? Someone who knows how to compose these pieces (models, tools, workflows) into systems that solve real problems.

It’s not about replacing programmers. It’s about elevating what we do. We spend more time on design, planning, and supervision. We think in terms of systems, not just functions. We delegate the repetitive work to agents and focus on what humans do best: reasoning, creativity, and judgment.

Architecture, Product, and Delegation

This shift makes some skills more critical than ever. Architecture — understanding how pieces fit together, how data flows, how to scale. Product — knowing what to build and why, not just how. Design — user experience, interaction patterns, what feels right. And perhaps most underrated: learning to delegate. Trusting agents to do their part while we focus on the high-leverage work.

I’ve seen this at DailyBot. The teams that move fastest are the ones who get this. It’s not about writing more lines of code. It’s about designing the right system and knowing when to hand off.

Agent Tools in This New Era

I wanted to give the audience a map of the tools defining this moment. Models: the foundation, getting cheaper and more capable. Agents: autonomous or semi-autonomous systems that can plan, execute, and iterate. MCP (the Model Context Protocol): a standardized way for AI applications to connect models to external tools and data sources. Think of it as a universal adapter. Instead of every AI tool building custom integrations for Slack, GitHub, databases, and APIs, MCP provides a common protocol: you write one integration as an MCP server, and any MCP-compatible client can use it. That’s the unlock: composable, reusable tool access for AI agents. Development environments: IDEs and platforms that are being rebuilt around AI-assisted workflows.
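To make the "universal adapter" idea concrete, here is a minimal Python sketch of the pattern. It is a toy, in-process stand-in, not the official MCP SDK and not a complete implementation of the protocol: the `ToolServer` class, the `post_message` tool, and the `#standup` channel are all hypothetical. The two method names (`tools/list` and `tools/call`) and the JSON-RPC message shape are loosely modeled on how MCP exposes tools, while the handshake, transports, and error handling a real server needs are left out.

```python
from typing import Any, Callable


class ToolServer:
    """Toy in-process stand-in for an MCP-style tool server (not the real SDK)."""

    def __init__(self) -> None:
        self._tools: dict[str, dict[str, Any]] = {}

    def tool(self, name: str, description: str, input_schema: dict[str, Any]):
        """Decorator that registers a function as a discoverable tool."""
        def register(fn: Callable[..., str]) -> Callable[..., str]:
            self._tools[name] = {
                "description": description,
                "inputSchema": input_schema,
                "fn": fn,
            }
            return fn
        return register

    def handle(self, request: dict[str, Any]) -> dict[str, Any]:
        """Answer one JSON-RPC-shaped request: tool discovery or invocation."""
        reply: dict[str, Any] = {"jsonrpc": "2.0", "id": request.get("id")}
        method = request.get("method")
        if method == "tools/list":
            # Discovery: describe every tool without exposing the Python function.
            reply["result"] = {
                "tools": [
                    {
                        "name": name,
                        "description": spec["description"],
                        "inputSchema": spec["inputSchema"],
                    }
                    for name, spec in self._tools.items()
                ]
            }
        elif method == "tools/call":
            # Invocation: look the tool up by name and pass the arguments through.
            params = request["params"]
            fn = self._tools[params["name"]]["fn"]
            text = fn(**params.get("arguments", {}))
            reply["result"] = {"content": [{"type": "text", "text": text}]}
        else:
            reply["error"] = {"code": -32601, "message": "Method not found"}
        return reply


server = ToolServer()


@server.tool(
    name="post_message",
    description="Post a message to a chat channel (simulated).",
    input_schema={
        "type": "object",
        "properties": {"channel": {"type": "string"}, "text": {"type": "string"}},
        "required": ["channel", "text"],
    },
)
def post_message(channel: str, text: str) -> str:
    # Hypothetical integration: a real one would call the chat platform's API.
    return f"posted to {channel}: {text}"


# Any client that speaks these two methods can discover and call the tool.
listing = server.handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
call = server.handle({
    "jsonrpc": "2.0",
    "id": 2,
    "method": "tools/call",
    "params": {
        "name": "post_message",
        "arguments": {"channel": "#standup", "text": "Build is green"},
    },
})
```

The integration is written once, behind `handle`. Whichever client sends the requests, it only needs the tool's name and input schema, which is what makes the integration reusable.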

The ecosystem is moving fast. What matters isn’t memorizing every tool — it’s understanding the patterns: how agents reason, how they use tools, how they can be composed. That’s the skill that transfers.

An example of agent delegation from DailyBot: we built a workflow where an agent analyzes a team’s standup responses, identifies blockers, and routes them to the right person with context. The agent doesn’t just summarize — it reasons about priority, delegates follow-up actions, and monitors completion. We didn’t write explicit rules for every case. We defined the goal, gave the agent the tools (Slack API, task tracker, user directory), and it figured out the rest. That’s the shift: from writing code that handles every path to designing systems that can adapt.
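The shape of that workflow can be sketched as a small tool-calling loop. This is an illustrative Python sketch under loud assumptions, not DailyBot's implementation: the tools (`find_owner`, `create_task`, `notify`), the in-memory data, and the names in it are hypothetical stand-ins for a user directory, a task tracker, and Slack. The `decide` function is where a model would choose the next step. Here it is a scripted stub so the example runs without any API.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class Action:
    tool: str                                  # tool name, or "finish" to stop
    args: dict[str, Any] = field(default_factory=dict)


# Hypothetical in-memory stand-ins for the user directory, tracker, and chat.
DIRECTORY = {"payments-api": "ana", "ci": "luis"}
TASKS: list[dict[str, Any]] = []
SENT: list[tuple[str, str]] = []


def find_owner(component: str) -> str:
    return DIRECTORY.get(component, "team-lead")


def create_task(owner: str, title: str) -> str:
    TASKS.append({"owner": owner, "title": title, "done": False})
    return f"task-{len(TASKS)}"


def notify(user: str, message: str) -> str:
    SENT.append((user, message))
    return "sent"


TOOLS: dict[str, Callable[..., str]] = {
    "find_owner": find_owner,
    "create_task": create_task,
    "notify": notify,
}

History = list[tuple[Action, str]]


def run_agent(goal: str, decide: Callable[[str, History], Action],
              max_steps: int = 10) -> History:
    """Ask `decide` for the next action, run it, and feed the result back."""
    history: History = []
    for _ in range(max_steps):
        action = decide(goal, history)
        if action.tool == "finish":
            break
        result = TOOLS[action.tool](**action.args)
        history.append((action, result))
    return history


def scripted_decide(goal: str, history: History) -> Action:
    """Stub for the model: a real agent would pick each step from goal + history."""
    step = len(history)
    if step == 0:
        return Action("find_owner", {"component": "payments-api"})
    if step == 1:
        return Action("create_task", {
            "owner": history[0][1],
            "title": "Unblock: payments-api sandbox keys expired",
        })
    if step == 2:
        owner, task_id = history[0][1], history[1][1]
        return Action("notify", {
            "user": owner,
            "message": f"{task_id}: Dana is blocked on payments-api sandbox keys",
        })
    return Action("finish")


history = run_agent("Route blockers from today's standup", scripted_decide)
```

The loop itself contains no routing rules. Everything specific to the blocker lives in the decision function, which is the part a model would take over, while the tools and the step limit stay under the engineer's control.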

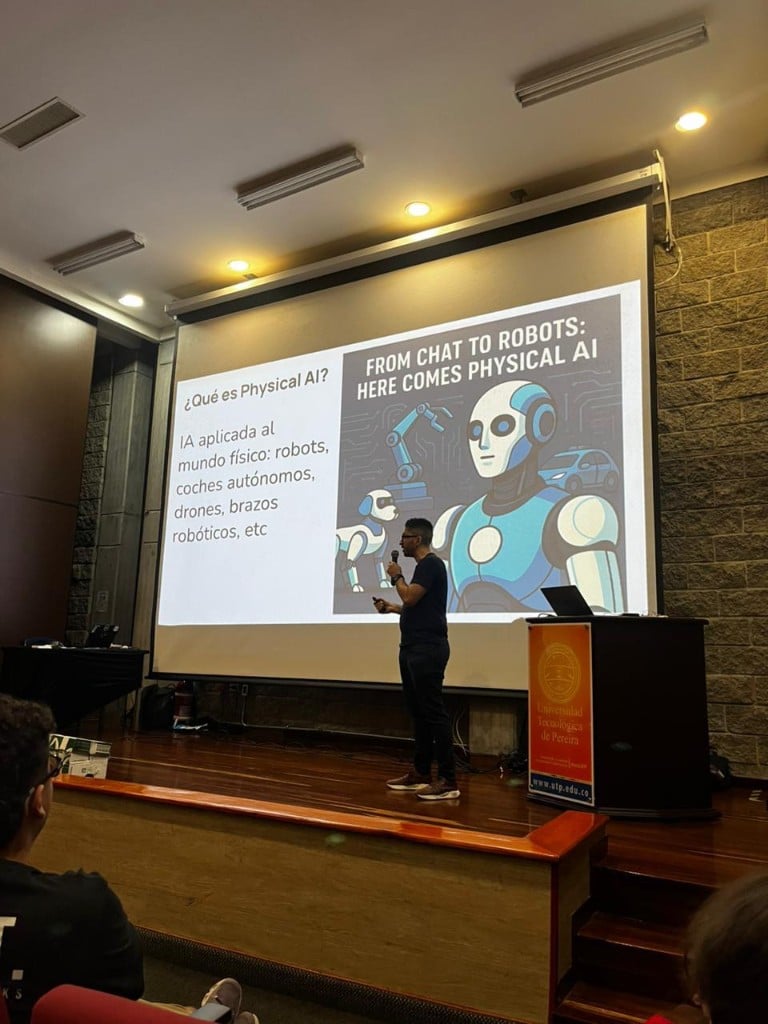

Physical AI: When Software Meets the Real World

I saved this part for last because I wanted to go beyond the screen. Physical AI — how AI is driving robotics and hardware. We’re no longer confined to virtual environments. AI can perceive the world, control robots, interact with physical systems. This is where code meets the real world: sensors, actuators, embodied intelligence.

I brought a robot with mecanum wheels to demo. Seeing it move in response to commands — that’s when it clicks. It’s not just chatbots and code generation. It’s robots that learn, systems that adapt, hardware that thinks. For those building products and startups, that opens a whole new frontier.
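For readers curious how a command turns into motion on a mecanum base, here is a minimal Python sketch of the textbook inverse kinematics for a four-wheel layout. It is not the code that ran on the demo robot: the `MecanumBase` class and the dimensions are hypothetical, and the signs assume x forward, y to the left, counterclockwise rotation positive, and rollers mounted in the common X pattern seen from above. A different mounting flips some signs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MecanumBase:
    wheel_radius: float   # metres
    half_length: float    # metres, centre to axle along x (forward)
    half_width: float     # metres, centre to wheel along y (left)

    def wheel_speeds(self, vx: float, vy: float, wz: float) -> dict[str, float]:
        """Convert a body velocity command into wheel angular speeds (rad/s).

        vx: forward speed (m/s), vy: leftward speed (m/s),
        wz: counterclockwise rotation rate (rad/s).
        """
        k = self.half_length + self.half_width
        r = self.wheel_radius
        return {
            "front_left": (vx - vy - k * wz) / r,
            "front_right": (vx + vy + k * wz) / r,
            "rear_left": (vx + vy - k * wz) / r,
            "rear_right": (vx - vy + k * wz) / r,
        }


# Hypothetical dimensions for a small tabletop robot.
base = MecanumBase(wheel_radius=0.05, half_length=0.10, half_width=0.10)

forward = base.wheel_speeds(vx=0.2, vy=0.0, wz=0.0)      # all wheels turn together
strafe_left = base.wheel_speeds(vx=0.0, vy=0.2, wz=0.0)  # diagonal pairs oppose
spin = base.wheel_speeds(vx=0.0, vy=0.0, wz=1.0)         # left and right sides oppose
```

Driving forward turns all four wheels the same way, while strafing spins the two diagonal pairs in opposite directions. That sideways motion without turning the chassis is what makes mecanum wheels a good demo.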

What This Means for Us

I ended with a simple idea: the tools are here. The APIs are open. The question isn’t whether AI will change how we build — it already has. The question is whether we’ll lean into it. Design, plan, supervise. Learn to delegate. Build with agents. And when it makes sense, think beyond the screen.

Thanks to everyone who came out — and to Sebastián for co-presenting. Let’s keep building.